Object Tracking

The tracking module takes the sparse registration results produced by the registration module above and embeds them in a particle filter framework to track moving objects in video accurately. Robust multi-object tracking is difficult because of noise, occlusion, fast camera motion, low-resolution image capture, varying viewpoints, and illumination changes.

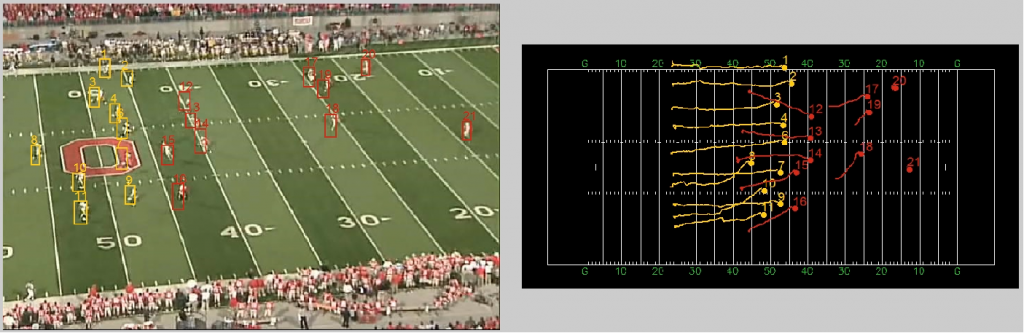

The tracking module uses a novel particle-filter-based tracking algorithm that combines object appearance information (e.g., color and shape) in the image domain with cross-domain contextual information in the reference domain. Without the cross-domain contextual information, tracking performance in the image domain would suffer from camera motion. In the reference domain, the effect of fast camera motion is significantly alleviated, since the underlying homography transform from each frame to the reference domain can be accurately estimated. Contextual trajectory information, both intra-trajectory and inter-trajectory, is used to further improve the prediction of object states within the particle filter framework. Here, intra-trajectory contextual information is based on the history of tracking results in the reference domain, while inter-trajectory contextual information is extracted from a compiled trajectory dataset built from tracks computed on videos of the same type.
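
To make the idea concrete, here is a minimal sketch of a particle filter that runs in the reference domain. It is an illustration under stated assumptions, not our actual algorithm: the function names (`to_reference`, `history_velocity`, `particle_filter_step`) are hypothetical, the appearance model is replaced by a simple Gaussian likelihood around an observed position, intra-trajectory context is approximated by the mean displacement of recent track points, and inter-trajectory context is omitted entirely.

```python
import numpy as np

def to_reference(H, pts):
    """Map Nx2 image points into the reference domain with a 3x3 homography H."""
    pts_h = np.hstack([pts, np.ones((len(pts), 1))])  # homogeneous coordinates
    mapped = pts_h @ H.T
    return mapped[:, :2] / mapped[:, 2:3]             # divide out the scale

def history_velocity(track, k=5):
    """Assumed stand-in for intra-trajectory context: mean of the last k displacements."""
    track = np.asarray(track, dtype=float)
    if len(track) < 2:
        return np.zeros(2)
    return np.diff(track[-(k + 1):], axis=0).mean(axis=0)

def particle_filter_step(particles, weights, velocity, observation, rng,
                         motion_std=1.0, obs_std=2.0):
    """One predict / update / resample cycle over 2-D reference-domain positions."""
    n = len(particles)
    # Predict: move each particle by the context velocity plus Gaussian noise.
    particles = particles + velocity + rng.normal(0.0, motion_std, particles.shape)
    # Update: Gaussian position likelihood (a placeholder for an appearance model).
    d2 = np.sum((particles - observation) ** 2, axis=1)
    weights = weights * np.exp(-0.5 * d2 / obs_std ** 2) + 1e-300
    weights = weights / weights.sum()
    # State estimate: weighted mean of the particles.
    estimate = weights @ particles
    # Systematic resampling to avoid weight degeneracy.
    positions = (rng.random() + np.arange(n)) / n
    idx = np.minimum(np.searchsorted(np.cumsum(weights), positions), n - 1)
    return particles[idx], np.full(n, 1.0 / n), estimate
```

In use, each detection would first be mapped with `to_reference` using the homography estimated for its frame, so that the filter only ever sees positions from which camera motion has been removed. The velocity passed to `particle_filter_step` can come from `history_velocity` applied to the object's past reference-domain positions.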

Find out more about our tracking methods on our publications page.