Research

We assume that the input video is captured by a non-translating camera that can pan, tilt, and zoom. This kind of camera motion is common in non-static video, especially sports broadcasts and surveillance footage captured by PTZ (pan-tilt-zoom) cameras. Our current research applies to any non-translating PTZ video in which tracking objects and analyzing their motion patterns would be beneficial.
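The non-translating assumption matters because two frames from a camera that only rotates and zooms are related by a single 3x3 homography, H = K_dst · R · K_src⁻¹, regardless of scene depth. The sketch below illustrates that relationship only; it is not the project's registration code. The function names, the simple pinhole model (square pixels, no skew or lens distortion), and the pan/tilt axis and sign conventions are assumptions made for illustration.

```python
import numpy as np

def ptz_homography(pan_deg, tilt_deg, f_src, f_dst, cx, cy):
    """Homography taking source-frame pixels to destination-frame pixels
    for a camera that only rotates (pan, tilt) and zooms (focal change).

    Assumed conventions (hypothetical): pan rotates about the camera's
    y axis, tilt about its x axis, and both frames share the principal
    point (cx, cy).
    """
    pan, tilt = np.radians([pan_deg, tilt_deg])
    r_pan = np.array([[np.cos(pan), 0.0, np.sin(pan)],
                      [0.0, 1.0, 0.0],
                      [-np.sin(pan), 0.0, np.cos(pan)]])
    r_tilt = np.array([[1.0, 0.0, 0.0],
                       [0.0, np.cos(tilt), -np.sin(tilt)],
                       [0.0, np.sin(tilt), np.cos(tilt)]])
    rotation = r_tilt @ r_pan

    # Pinhole intrinsics before and after the zoom.
    k_src = np.array([[f_src, 0.0, cx], [0.0, f_src, cy], [0.0, 0.0, 1.0]])
    k_dst = np.array([[f_dst, 0.0, cx], [0.0, f_dst, cy], [0.0, 0.0, 1.0]])

    return k_dst @ rotation @ np.linalg.inv(k_src)

def warp_point(H, x, y):
    """Apply homography H to pixel (x, y) and return the mapped pixel."""
    p = H @ np.array([x, y, 1.0])
    return p[:2] / p[2]

# Pure 2x zoom, no rotation: offsets from the principal point double.
H_zoom = ptz_homography(0.0, 0.0, f_src=1000.0, f_dst=2000.0, cx=640.0, cy=360.0)
zoomed = warp_point(H_zoom, 650.0, 360.0)
```

In this example a pixel 10 columns to the right of the principal point lands 20 columns to the right after the zoom. In practice the homography would be estimated from the video itself rather than computed from known camera angles.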

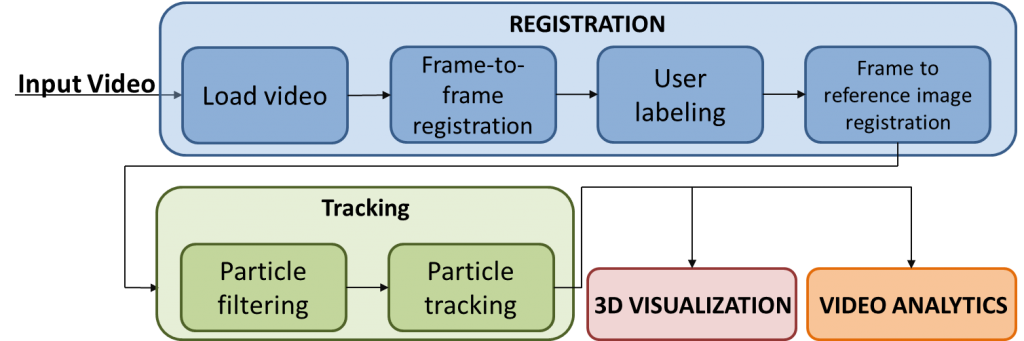

This project uses image processing and computer vision techniques to provide a computational framework suitable for the semantic analysis of complex activities in video. The framework consists of four main modules: (1) registration, (2) tracking, (3) video analytics, and (4) 3D dynamic visualization. Together, these modules provide analytical capabilities for automatically detecting, recognizing, and understanding important events and activities in a given video. In the case of our sports application “AutoScout,” the system allows coaches to quickly understand and interpret statistics from the large number of video clips in their database. The system can also extract and understand important patterns or team strategies from multiple videos based on players’ trajectories, ultimately allowing coaches and players to learn strategies and formulate a plan based on them.

The framework provides a solid foundation for further extensions, such as retrieval of similar events or activities. It has been designed to accommodate all types of video (e.g., sports, security surveillance, and broadcast), although its main application currently lies in the sports domain.